In most cases, this is an easy question. The time and effort are what matter, not so much the distance. So I almost always say: stick to the duration, not the distance. But I could see an argument that things get tricky when you're SO far off the original pace. Normally we're talking about 10, 30, maybe 45 sec/mile "off" pace. But 2 min/mile is quite a bit more. So does the dramatic reduction in pace/effort offset the extra time? For instance, how does an 8.85 mile run at a 17:14 pace compare to a 10 mile run at a 15:00 pace? Does the 12% change in distance get offset by the 13% change in pace? And how different is a 10 mile run at 17:14 (171 min) from a 10 mile run at 15:00 (150 min)? That would be a 14% increase in time.
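To make the percentages above easy to recheck, here is a minimal sketch of the arithmetic. The helper names (`pace_to_minutes`, `pct_change`) are just made up for illustration, and the "change in pace" is computed as a change in speed (mph), which is my assumption about how the 13% was arrived at.

```python
def pace_to_minutes(pace: str) -> float:
    """Convert a 'mm:ss' per-mile pace string to decimal minutes per mile."""
    minutes, seconds = pace.split(":")
    return int(minutes) + int(seconds) / 60


def pct_change(new: float, old: float) -> float:
    """Percent change going from old to new."""
    return (new - old) / old * 100


fast_pace = pace_to_minutes("15:00")  # planned pace, min/mile
slow_pace = pace_to_minutes("17:14")  # actual pace, min/mile

planned_min = 10 * fast_pace          # 10 miles at planned pace
short_slow_min = 8.85 * slow_pace     # run cut off near the planned duration
full_slow_min = 10 * slow_pace        # full distance at the slower pace

distance_change = pct_change(8.85, 10)
# Assumption: "change in pace" is measured as change in speed (mph).
speed_change = pct_change(60 / slow_pace, 60 / fast_pace)
time_change = pct_change(full_slow_min, planned_min)

print(f"planned: {planned_min:.1f} min, short/slow: {short_slow_min:.1f} min, "
      f"full/slow: {full_slow_min:.1f} min")
print(f"distance {distance_change:+.1f}%, speed {speed_change:+.1f}%, "
      f"time {time_change:+.1f}%")
```

Run exactly, the full 10 miles at 17:14 comes out a touch above the rounded 171 min and 14% quoted above, but the picture is the same: the shortened run lands at nearly the planned duration.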

So I decided to take a highly

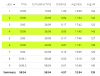

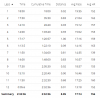

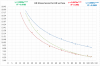

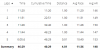

theoretical stab at it. I'm making the assumption that your HR Stress Score per HR is the same for every relative pace. I don't know that to be true. Second, I'm using some of my 30/30 sec run/walk with Gigi to get an idea as to what kind of HR SS/hr values I get for paces wildly slower than my normal paces. I also adjusted the pace tree based on what my current fitness was at that moment in time as well. I then plotted these all against each other on a graph, and then used a power line of best fit.
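For anyone who wants to try the same kind of fit, here is a rough sketch of one common way to get a power line of best fit: a straight-line least-squares fit in log-log space. I'm not claiming this is exactly what the graphing tool did. The data points are PLACEHOLDERS generated from a made-up curve, not my actual HR SS/hr numbers, and `fit_power_law` is a hypothetical helper name.

```python
import math


def fit_power_law(xs, ys):
    """Fit y = a * x**b by ordinary least squares on (log x, log y)."""
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    n = len(log_x)
    mean_x = sum(log_x) / n
    mean_y = sum(log_y) / n
    sxx = sum((x - mean_x) ** 2 for x in log_x)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log_x, log_y))
    b = sxy / sxx                       # slope in log-log space = exponent
    a = math.exp(mean_y - b * mean_x)   # intercept in log-log space = log(a)
    return a, b


# PLACEHOLDER data only: paces in min/mile and stress score per hour,
# generated from an arbitrary known curve so the fit can be checked.
true_a, true_b = 5000.0, -2.0
paces_min_per_mile = [8.0, 10.0, 12.0, 15.0, 17.0]
ss_per_hr = [true_a * p ** true_b for p in paces_min_per_mile]

a, b = fit_power_law(paces_min_per_mile, ss_per_hr)


def predict_ss_per_hr(pace_min_per_mile: float) -> float:
    """Read a stress score per hour off the fitted curve."""
    return a * pace_min_per_mile ** b


print(f"fitted a={a:.1f}, b={b:.3f}")
```

With real data you would swap in your own pace and SS/hr pairs, then read values off the curve with `predict_ss_per_hr` for paces you have never actually run.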

View attachment 545154

View attachment 545153

The green and red lines are mine. The blue line is yours. The shapes of green and red diverge a bit at the super easy paces. But your blue and my red aren't terribly different from each other when you look at the slope of the curve. So a 17:17 pace would be a TSS/hr of ~23 and a 15:07 pace would be a TSS/hr of ~31. That makes a 171 min run at 23/hr worth 65.5 TSS and a 150 min run at 31/hr worth 77.5 TSS. So the 150 min run at 15:07 min/mile would have a higher TSS than the 17:17 min/mile run of equal distance. For the 17:17 min/mile run to be of equivalent TSS, it would need to be 3:22 (hours:minutes) long, or 11.7 miles in length.
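The TSS numbers above are just rate times hours, so here's a small sketch that reproduces them. It assumes, like the rest of this post, that TSS/hr stays constant for a given pace over the whole run. The function names are hypothetical.

```python
def pace_to_minutes(pace: str) -> float:
    """Convert a 'mm:ss' per-mile pace string to decimal minutes per mile."""
    minutes, seconds = pace.split(":")
    return int(minutes) + int(seconds) / 60


def tss(rate_per_hr: float, minutes: float) -> float:
    """Total stress score, assuming a constant rate for the whole run."""
    return rate_per_hr * minutes / 60


def minutes_to_match(target_tss: float, rate_per_hr: float) -> float:
    """Minutes needed at a given rate to reach a target TSS."""
    return target_tss / rate_per_hr * 60


slow_tss = tss(23, 171)       # 17:17 pace, 171 min
planned_tss = tss(31, 150)    # 15:07 pace, 150 min

needed_min = minutes_to_match(planned_tss, 23)
needed_miles = needed_min / pace_to_minutes("17:17")
hours, mins = divmod(round(needed_min), 60)

print(f"slow run: {slow_tss:.1f} TSS, planned run: {planned_tss:.1f} TSS")
print(f"to match at 17:17 pace: {hours}:{mins:02d} or {needed_miles:.1f} miles")
```

Swapping in a different pair of rates and durations gives the same kind of comparison for any two runs, as long as you're willing to trust the values read off the curve.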

TSS is not the end all be all, but simply an evaluative tool for training plan development.

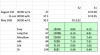

One caveat to this comparison: mine is road vs road and yours is road vs MEGA trail. So where those efforts fall for you on the trail is definitely going to be shifted downwards. The goal effort for your trail runs is a 13 min/mile. The actual adjusted expected pace for the 12/20 run was a 15:15 min/mile. So if we adjust your trail runs down to GAP effort levels, then we should be comparing 13 min/mile pace (or 15:15 training plan pace) to 15 min/mile pace (or 17 min/mile actual pace with niece). So that would be 45 SS/hr vs 31 SS/hr. Or 112.5 planned vs 88.4 actual. Again, to make them equivalent would take 3:37 (hours:minutes) of the 17 min/mile paced run, or 12.8 miles.

Now let's compare 5k Pace/Effort to 10k Pace/Effort. 5k pace is 104 SS/hr and 10k pace is 99 SS/hr, a difference of about 5%. The difference between the paces is about 4%, despite my ability to hold 10k pace for roughly twice as long as 5k pace. So this is where I think the comparison above could be faulty. A hard 10k is a TSS of 66 (40 min run at 99 SS/hr) and a hard 5k is a TSS of 35 (20 min run at 104 SS/hr). But there's no way I'd argue that doing the equivalent TSS of the 10k at 5k pace would make them equally difficult, because that would require me to do 38 min of my 5k pace (or 5.7 miles of 5k pace). Yea... something seems off with that. That's why intensity matters when evaluating what the actual impact of a run is, and I haven't seen a calculation that quite takes that into account. I mean, there is something to be said for it taking minimal time to recover from a 5k compared to a 10k, but in the moment, they both suck to run at those paces, even though the durations are far from equal.

So I think cutting off your runs at the desired duration is still the safer choice. I think it's better to be safer at the end of the day. I'd rather be a little undertrained than a little overtrained. Of course I say that, and then some days I can't convince myself to get out of my own way.